Agentic AI Insurance Claims

Designing the trust layer for AI-driven decisions

Where Trust Breaks (The Problem)

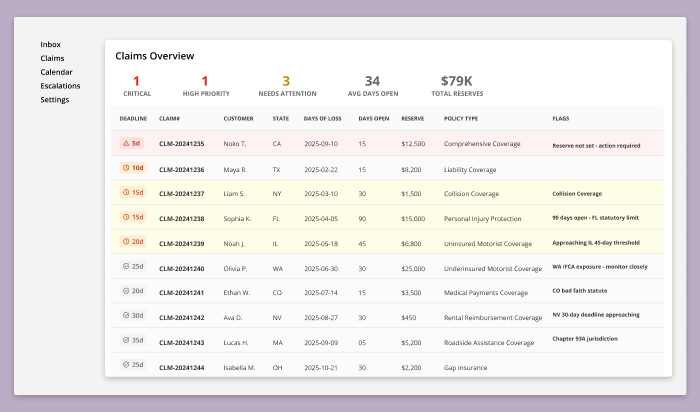

Insurance claims are slow and fragmented. AI improves speed, but it also creates a trust gap: decisions are made instantly, yet users are left without context, reasoning, or control.

Drivers don’t understand decisions

Adjusters don’t trust AI outputs

→ Decisions happen faster than humans can verify them

What Needed to Change (The Goal)

The goal wasn’t automation. It was making AI decisions understandable, accountable, and usable by humans.

Design a claims experience that:

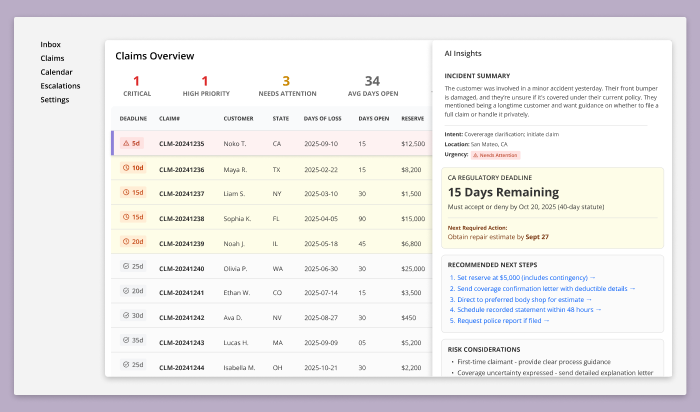

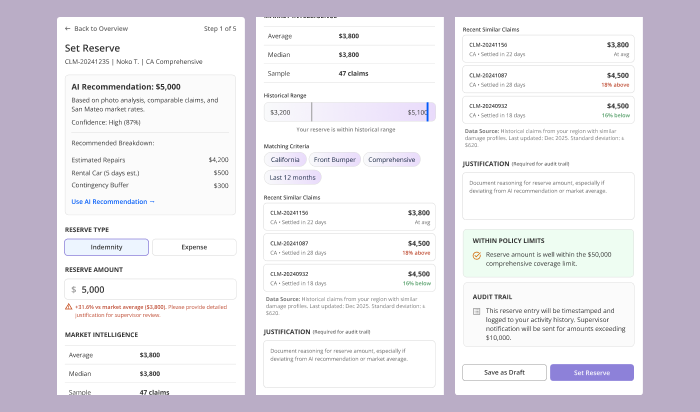

makes AI decisions transparent

keeps adjusters in control

reduces uncertainty for drivers

What Changed

(The Impact)

System Design Moves (What I did)

Exposed the “black box” failure in AI decision-making

Separated driver and adjuster workflows to reflect real-world roles

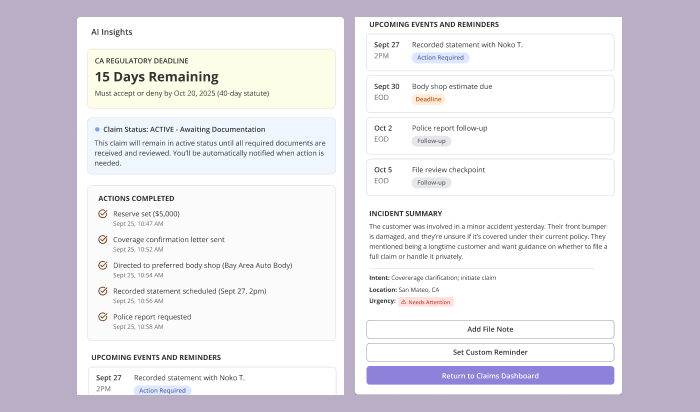

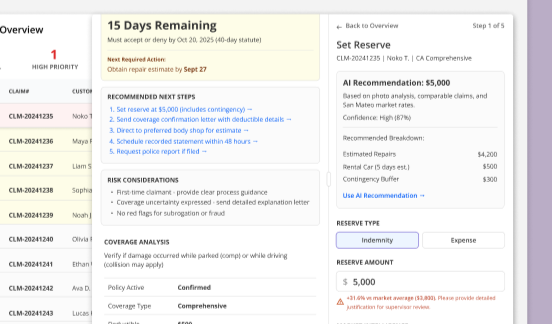

Turned AI outputs into explainable, editable decisions

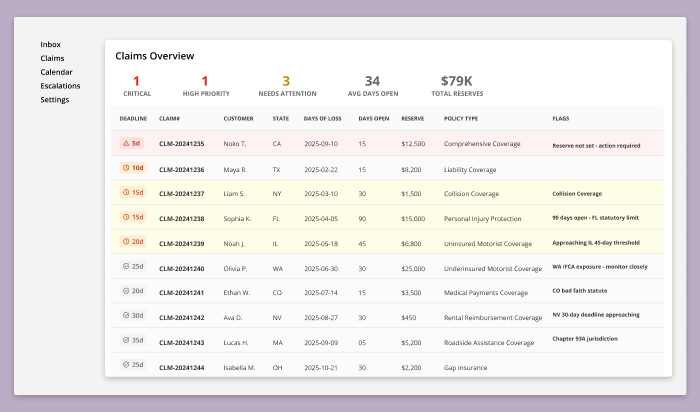

Reframed prioritization around regulatory deadlines, not model confidence

Established trust as a measurable system metric

Reduced adjuster cognitive load by replacing fragmented tools with a unified system

Increased trust in AI decisions by exposing reasoning and confidence

Improved decision clarity by turning AI outputs into explainable, editable inputs

Created a human ↔ AI feedback loop through override and audit mechanisms.
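To make the override and audit loop concrete, here is a minimal Python sketch of how an explainable, editable decision could be modeled, together with deadline-first prioritization. Every name in it (`ClaimDecision`, `AuditEntry`, `review_queue`, and all field names) is a hypothetical assumption for illustration, not the actual data model behind this project.

```python
from dataclasses import dataclass, field
from datetime import date


@dataclass
class AuditEntry:
    """One recorded adjuster override (hypothetical schema)."""
    claim_id: str
    adjuster: str
    ai_outcome: str
    final_outcome: str
    reason: str


@dataclass
class ClaimDecision:
    """An AI recommendation that an adjuster can inspect and edit."""
    claim_id: str
    ai_outcome: str            # e.g. "approve" or "deny"
    confidence: float          # model confidence, 0.0 to 1.0
    reasoning: list[str]       # plain-language factors shown to the user
    regulatory_deadline: date  # assumed: date by which a response is due
    final_outcome: str = ""
    audit_log: list[AuditEntry] = field(default_factory=list)

    def __post_init__(self) -> None:
        # Until a human intervenes, the AI recommendation stands.
        if not self.final_outcome:
            self.final_outcome = self.ai_outcome

    def override(self, adjuster: str, outcome: str, reason: str) -> None:
        """Replace the outcome and record who changed it and why."""
        if not reason.strip():
            raise ValueError("an override needs a stated reason")
        self.audit_log.append(
            AuditEntry(self.claim_id, adjuster, self.ai_outcome, outcome, reason)
        )
        self.final_outcome = outcome


def review_queue(decisions: list[ClaimDecision]) -> list[ClaimDecision]:
    """Order the adjuster queue by nearest deadline; confidence is ignored."""
    return sorted(decisions, key=lambda d: d.regulatory_deadline)
```

In this sketch the original AI outcome is never overwritten, so each override leaves a before-and-after record that can be audited later. The queue sorts on `regulatory_deadline` alone, which keeps a high-confidence claim from jumping ahead of one that is closer to its deadline.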